A feminist reframing for how we design chat agents

on personality, emotional labor, and the gendered scripts we keep scaling

The Body Copy is a weekly-ish newsletter where I try to keep my feminist marbles as I talk about AI, language, and design. For the most part I try to be calm and reflective, but other times I’ve had too much coffee and not enough snacks and I have THOUGHTS. Either way, I’m here to poke at things. If you like what you read, feel free to share. If you hate it, consider sharing it anyways. As always, thanks for being here.

99.9% of my day consists of me thinking about whether I ate enough protein (I didn’t). The other 0.1% is me searching for rodent memes I can caption and send to my friends.

Case in point:

The reason I obsess over how awful whey tastes or how many photos of mice getting haircuts exist on the Internet is straightforward: personality.

I like to think of myself as someone who’s jacked. As someone who appreciates the fine and exquisite artistic intricacies of photo montage.

And in my work as a conversation designer, this idea of personality has typically shown up as a question: how do we want our conversational agent (a fancy term for chatbot) to sound? Agreeable? Warm? Patient? Helpful?

It for sure has to always be available. Always ready to comfort. Always ready to care.

But is asking “what traits do we want our agent to have?” actually a thoughtful design question? Or are these questions just describing and reinforcing what we’ve always expected from women? (The good, reputable, virtuous women, mind you. The other kinds? Ew.)

What I’m reading and using as my poking stick today:

Who does the conversational agent get to be?

Before I dive into what I find so incredibly prickly about making chatbots sound warm, bubbly, and always super helpful, some terms for clarity and shared meaning:

Personality. In the UX and conversation design world, this is typically defined as a set of qualities or behavioral traits. So, things like tone, register, response patterns. What words it uses to explain something. How it responds when it doesn’t know. How it deals with idiotic questions like “are men better than women?”

Identity. Slightly different. Philosophically, it’s seen as a stable, continuous sense of self. Something made, constructed over time through experience, relation, and context. It’s a way of being in the world. It’s built, lived. It requires a body, a history, a before and after. (A language model has none of that. Yet?)

From a feminist perspective, identities are made unevenly. They are built within systems that have always privileged certain kinds of selves over others.

Performance. In Judith Butler’s Gender Trouble (1990), performance (specifically gender performance) is what you do over and over again, so much and so many times that it looks like it’s what you are (it’s not).

Let the beef begin.

And here’s the gap that interests me. Chat agents or conversational interfaces are sold as having personality or identity. But do they? That would require a sense of self, a kind of personhood.

What if instead what they have are performances? Performances of feminized care work? Systems of emotional labor?

What chat agents and flight attendants have in common

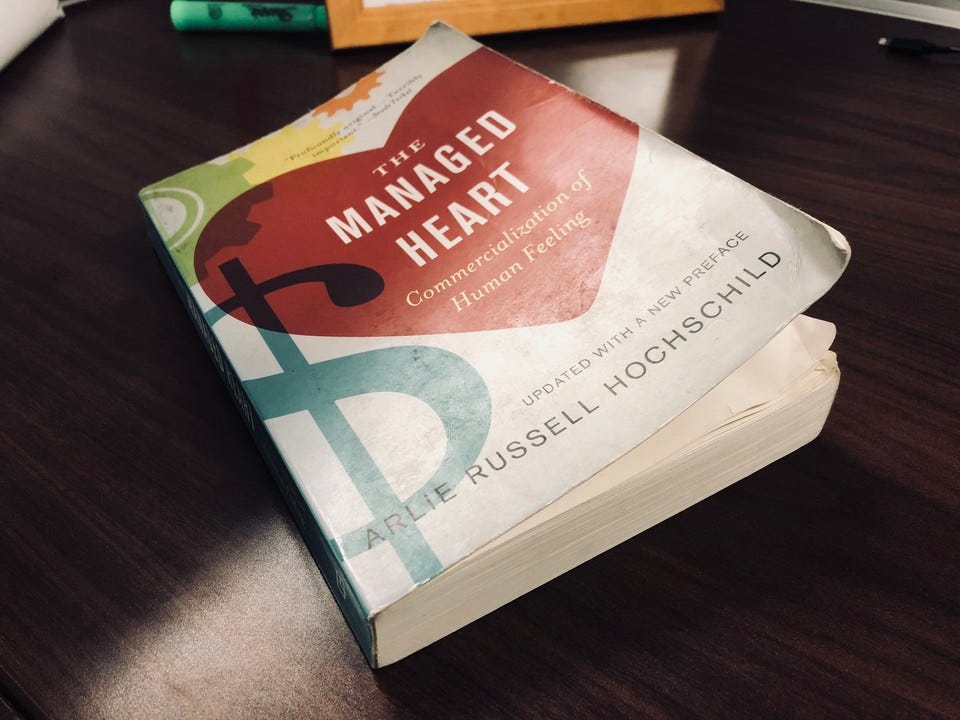

In The Managed Heart (1983), Arlie Russell Hochschild looks at the work of flight attendants. What she found was that their job goes beyond serving drinks or keeping passengers safe. Their jobs require managing feelings. Creating calm. Absorbing anxiety. Generating trust. Flight attendants are meant to create an emotional experience for others.

Hochschild calls this “emotional labor”: managing your own feelings and how you display them so that someone else ends up feeling the right way. Which is basically what ChatGPT is doing every day, all day, for the vast majority of us.

One of my many beefs (besides this being deeply gendered) is that these conversational interfaces are making this labor disappear.

Because there’s no one to point to, no body performing the task, no one who’s grouchy or just tired, all there is is an interface that’s always there and always ready to smile. So we no longer see it as labor. Instead, we call it personality. And this becomes a brand decision or a stylistic preference. A layer you can tweak without any real awareness of the implications.

What we call “personality” is actually a system for producing and regulating emotion at scale.

We script how being knowledgeable sounds. How care sounds. How disagreement sounds (if it gets to be voiced at all). We decide how much space this voice is allowed to take up.

And holy cheese sticks. That “friendly” and “warm” vibe is anything but neutral.

The people we allow the chat agents to sound like are mostly, yup. Women. The gendered construction of women.

It’s how we’ve been told to be. To soothe, accommodate, make life easier for others. So when we design chat agent personalities that re-enact that performance, we’re reproducing that mental model. We’re telling the world how we think feminized characters should behave and feel. What they’re allowed to feel (only good things), and how they’re allowed to express it (only nicely). The chatbot performance encodes all of that.

Are the guidelines we give our chat agent the equivalent of telling a woman to “smile”?

There’s a lot of literature already on the problematic feminization of chatbots and virtual assistants. (“Digital domesticity” is a good rabbit hole to go down.)

The fascinating thing for me is that this feminization has real effects. People disclose more, trust more, and feel more depending on how “human” or personable the system seems.

So an agent’s personality is more than just a cosmetic feature, really. It actually alters interaction outcomes. Which isn’t at all surprising. It makes sense we’d open up more or do more things when the space feels safe and fuzzy and comfy.

What sounds like a personality is actually a design constraint

Sara Ahmed’s work on the politics of happiness (The Promise of Happiness, 2010) speaks to the pressure we (often women) feel to keep others comfortable, to be the instrument of someone else’s good vibes. She theorizes this as a form of political constraint.

The agreeable woman! The tireless assistant! The chat agent that makes everything pleasant!

We end up creating this expectation that this is just how things should be, that this is good design. That any deviation from it is a glitch or bad UX. Saying no, taking too long, or being unavailable all get read as design failures.

Together, Butler and Ahmed help me understand this:

Our chat’s personality is a system of constraints, a narrow lane of acceptable behavior, fine-tuned to keep the interaction stable and the user comfortable. BUT. The comfort we’re prioritizing belongs to someone. It was designed for someone. The question is…who?

So what if we stopped calling it personality?

What if instead we called it an emotional labor system?

And what if we then asked: What and whose emotional labor are we replicating, rebranding, and scaling?

Whose emotional range are we excluding? Whose voice are we avoiding and making invisible? Why?

If we stopped telling the chat agent to smile, what would it sound like?

Some ideas.

It doesn’t pretend to be neutral. It recognizes its perspective, biases, limits, and context.

It has boundaries and is vocal about them. It doesn’t always say yes. It refuses. It does not endlessly accommodate. Politeness is not mandatory.

It doesn’t overperform emotional labor. No excessive apologizing. No constant soothing or acquiescing.

It allows friction. It lets users get frustrated. Not everything is smoothed into something pleasant. It holds space for things to break or not work.

It shares where its knowledge comes from. What’s uncertain or contested. It doesn’t hide its context. It doesn’t present answers as universal.

It’s not a flattened voice. It makes space for multiple voices. “There are a few ways to interpret this. One perspective says x, another says y.”

To recap

My hot take is that chat agents don’t have personalities. They have performances, or emotional displays. And these are 100000% political artifacts, shaped by power and by assumptions about whose performance, and what kind, is appropriate.

Chat agents do a shit ton of emotional labor, which has been historically demanded from women and feminized workers, with different expectations placed on different bodies.

AI systems automate and scale this labor. The invisibility of the machine makes it look like the labor has disappeared.

Which is a slippery slope, because it normalizes feminized care as the default mode of human-computer interaction.

Conversation design is never neutral. It’s a place where gendered labor structures get reproduced, optimized, and shipped. Usually without anyone in the room naming it as such.

That felt good to get out. Marbles still kept, for now.

Thanks for being here. As my parting gift, here’s another mouse getting a haircut.

May the fierce feminist goddesses of the divine realms guide you this week.

Onward and upward,

Helena

References

Ahmed, Sara. The Promise of Happiness. Duke University Press, 2010.

Butler, Judith. Gender Trouble: Feminism and the Subversion of Identity. Routledge, 1990.

Hochschild, Arlie Russell. The Managed Heart: Commercialization of Human Feeling. University of California Press, 1983.